The launch of Model Context Protocol (MCP) has sparked considerable debate amongst developers about whether it will replace traditional APIs.

Modern software relies heavily on APIs for communication between applications. But with the rise of AI agents and large language models (LLMs), MCP is changing how these systems interact with external tools and data.

After examining the technical specifications, real-world implementations, and performance benchmarks, the answer is more nuanced than you might expect.

This blog explains both technologies, showing when to use each and why comparing them might not be the right question.

Understanding Traditional APIs

Traditional APIs have formed the foundation of software integration for decades. They enable different systems to communicate through standardised protocols, following a request-response model where clients send requests and servers return responses.

REST APIs

REST (Representational State Transfer) operates on a stateless architecture where each request contains all necessary information. It uses standard HTTP methods like GET for retrieving data, POST for creating resources, PUT for updates, and DELETE for removing resources.

The stateless nature means servers do not retain client information between requests. Every request must include authentication credentials, relevant parameters, and all context needed to process it. This design makes REST APIs predictable and cacheable, which is why they power everything from payment gateways to social media platforms.
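As a rough illustration of statelessness, the sketch below builds (but never sends) two independent requests using Python's standard library. The host `api.example.com`, the `/users/42` path, and the token are placeholders rather than a real service.

```python
from urllib.request import Request

API_BASE = "https://api.example.com"   # hypothetical host
TOKEN = "example-token"                # placeholder credential


def build_request(method: str, path: str) -> Request:
    """Build a self-contained request; nothing is carried over from earlier calls."""
    return Request(
        url=f"{API_BASE}{path}",
        method=method,
        headers={
            "Authorization": f"Bearer {TOKEN}",
            "Accept": "application/json",
        },
    )


get_user = build_request("GET", "/users/42")
delete_user = build_request("DELETE", "/users/42")

# Each request repeats the credentials because the server remembers nothing.
for req in (get_user, delete_user):
    print(req.get_method(), req.full_url, req.get_header("Authorization"))
```

Because every request is complete on its own, any server behind a load balancer could handle either call.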

REST APIs excel in scenarios where you need a straightforward interface between your application and external services. When you call a REST endpoint, you receive a consistent response structure. This predictability simplifies error handling, testing, and maintenance.

However, REST has limitations. Multiple API calls are often needed to fetch related data, a pattern commonly described as the N+1 problem. A mobile app displaying a user profile might make one call for user data, another for posts, and additional calls for comments on each post. This creates latency issues, particularly on slower networks.
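The profile example can be simulated without a network. In this sketch the three functions stand in for REST endpoints, and the endpoint names and data are invented for illustration. A counter records how many round trips one screen would cost.

```python
from collections import Counter

calls = Counter()  # counts simulated round trips per endpoint

# In-memory stand-ins for a remote service (hypothetical data).
POSTS = {42: [101, 102, 103]}
COMMENTS = {101: ["nice"], 102: ["+1", "agreed"], 103: []}


def get_user(user_id):
    calls["GET /users/{id}"] += 1
    return {"id": user_id, "name": "Ada"}


def get_posts(user_id):
    calls["GET /users/{id}/posts"] += 1
    return POSTS[user_id]


def get_comments(post_id):
    calls["GET /posts/{id}/comments"] += 1
    return COMMENTS[post_id]


def load_profile(user_id):
    user = get_user(user_id)                         # 1 call
    posts = get_posts(user_id)                       # 1 call
    comments = {p: get_comments(p) for p in posts}   # N calls, one per post
    return {"user": user, "posts": posts, "comments": comments}


profile = load_profile(42)
total_calls = sum(calls.values())
print(total_calls)  # 1 + 1 + 3 posts = 5 round trips
```

The number of calls grows with the number of posts, which is why the cost is most noticeable on high-latency connections.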

SOAP APIs

SOAP (Simple Object Access Protocol) uses XML-based messaging with strict protocols. It was designed for enterprise environments where formal contracts and bulletproof reliability matter more than simplicity.

SOAP APIs include built-in error handling through standardised fault messages. Through extensions such as WS-AtomicTransaction, they can support ACID-style transactions, making them suitable for financial systems where a failed operation must roll back completely. The WS-Security specification adds message-level security, which is one reason banks and healthcare systems still rely on SOAP.

The trade-off is complexity. SOAP requires more bandwidth due to XML verbosity. Implementation demands understanding WSDL (Web Services Description Language) files and handling complex XML schemas. Modern developers often find SOAP unnecessarily complicated for typical web services.
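To make the verbosity point concrete, here is a small sketch that builds a SOAP 1.1 envelope with Python's standard library and compares it with a JSON payload carrying the same data. The `GetBalance` operation and the `http://example.com/bank` namespace are hypothetical.

```python
import json
import xml.etree.ElementTree as ET

SOAP_NS = "http://schemas.xmlsoap.org/soap/envelope/"   # SOAP 1.1 envelope namespace
BANK_NS = "http://example.com/bank"                     # hypothetical service namespace

ET.register_namespace("soap", SOAP_NS)

# Envelope > Body > operation element > parameters
envelope = ET.Element(f"{{{SOAP_NS}}}Envelope")
body = ET.SubElement(envelope, f"{{{SOAP_NS}}}Body")
request = ET.SubElement(body, f"{{{BANK_NS}}}GetBalance")
ET.SubElement(request, f"{{{BANK_NS}}}AccountId").text = "12345"

soap_payload = ET.tostring(envelope, encoding="unicode")
json_payload = json.dumps({"accountId": "12345"})

print(soap_payload)
print(len(soap_payload), "characters of XML vs", len(json_payload), "characters of JSON")
```

A real service would also publish a WSDL file describing this operation, which this sketch leaves out.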

GraphQL

GraphQL addresses REST’s over-fetching and under-fetching problems by letting clients specify exactly what data they need. Instead of multiple REST endpoints, GraphQL exposes a single endpoint where clients send queries describing their data requirements.

A mobile app can request a user’s name, last three posts, and total follower count in one query. The server returns precisely that data, nothing more. This reduces network requests and bandwidth usage, particularly valuable for mobile applications.

GraphQL introduces complexity through its query language and resolver functions. Servers must implement resolvers for each field, adding development overhead. Performance optimisation requires careful attention to query depth and complexity to prevent expensive operations.
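The sketch below shows both halves of that trade-off in miniature: the single request body a client might send, and a toy set of per-field resolvers on the server. It does not parse the query (a real GraphQL library would do that), so the requested field list is passed in by hand. The schema, including `followerCount` and `posts(last: 3)`, is an assumption made for the example.

```python
import json

# The query a client might POST to a single /graphql endpoint.
QUERY = """
query Profile($id: ID!) {
  user(id: $id) {
    name
    followerCount
    posts(last: 3) { title }
  }
}
"""
request_body = json.dumps({"query": QUERY, "variables": {"id": "42"}})

# Toy server side: one resolver per field, run only for requested fields.
USER = {"name": "Ada", "email": "ada@example.com", "followers": 1200,
        "posts": ["Intro", "REST notes", "GraphQL notes", "gRPC notes"]}

RESOLVERS = {
    "name": lambda u: u["name"],
    "email": lambda u: u["email"],
    "followerCount": lambda u: u["followers"],
    "posts": lambda u: [{"title": t} for t in u["posts"][-3:]],
}


def resolve_user(requested_fields):
    """Return only the fields the client asked for."""
    return {field: RESOLVERS[field](USER) for field in requested_fields}


result = resolve_user(["name", "followerCount", "posts"])
print(json.dumps(result, indent=2))
```

The `email` resolver never runs because the client did not request it, but someone still had to write and maintain it, which is the overhead described above.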

gRPC

gRPC, developed by Google, uses HTTP/2 for transport and Protocol Buffers for serialisation. It excels at high-performance communication between microservices.

Protocol Buffers are a binary serialisation format whose messages are typically much smaller than the equivalent JSON. This reduces bandwidth usage and serialisation overhead. HTTP/2 enables multiplexing multiple requests over a single connection, reducing latency in distributed systems.
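To see where the size difference comes from, here is a hand-rolled sketch of how Protocol Buffers encode a single integer field on the wire. It covers only non-negative varint fields and is meant as an illustration. Real projects would use code generated by `protoc` rather than anything like this.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a base-128 varint."""
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)  # high bit set: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_int_field(field_number: int, value: int) -> bytes:
    """Encode one varint field: the tag, then the value."""
    wire_type_varint = 0
    tag = (field_number << 3) | wire_type_varint
    return encode_varint(tag) + encode_varint(value)


# A message whose field number 1 holds the integer 150.
binary = encode_int_field(1, 150)
as_json = json.dumps({"a": 150}).encode("utf-8")

print(binary.hex(), len(binary), "bytes")
print(as_json, len(as_json), "bytes")
```

The field encodes to the three bytes `08 96 01`, because the field name is replaced by a number and the value is stored in binary rather than as text.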

gRPC supports bidirectional streaming, allowing clients and servers to send multiple messages back and forth. This makes it excellent for real-time communication between services. The downside is limited browser support and a steeper learning curve compared to REST.

What is Model Context Protocol?

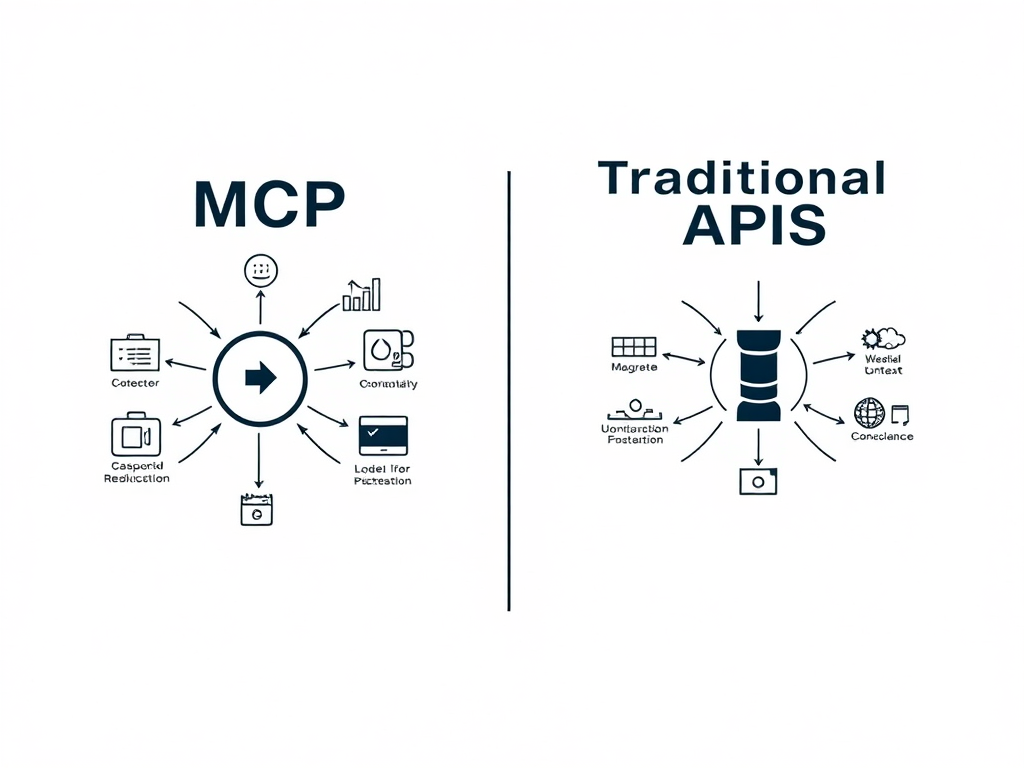

Model Context Protocol (MCP) is an open standard introduced by Anthropic in November 2024. It addresses a specific problem that traditional APIs were not designed to solve: enabling AI models to discover and interact with external tools and data sources dynamically.

The Problems MCP Solves

Before MCP, connecting AI models to external data required custom integrations for each data source. If your AI assistant needed access to Google Drive, GitHub, and a database, you would write three separate integration layers. Each integration required authentication logic, error handling, and updating code whenever the external service changed its API.

MCP standardises this process. Instead of writing custom integrations, you implement MCP servers that expose your data sources through a unified protocol. AI models can discover available servers, understand their capabilities, and interact with them without hard-coded logic.

How MCP Actually Works

MCP follows a client-server architecture where an MCP host (an AI application like Claude Desktop) establishes connections to one or more MCP servers, with each MCP client maintaining a dedicated one-to-one connection to its corresponding server.

The architecture has three kinds of participant:

- MCP Hosts: Applications that want to access external context. Claude Desktop, for example, acts as an MCP host. The host creates MCP clients that connect to various servers.

- MCP Clients: Protocol clients created by hosts to communicate with servers. Each client maintains a persistent connection to one server, handling message exchange and managing the connection lifecycle.

- MCP Servers: Lightweight programmes that expose specific functionality through the MCP protocol. A filesystem MCP server might expose tools for reading and writing files. A database server might provide query capabilities. A web search server could offer search functionality.
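The relationship between hosts, clients, and servers can be sketched with a few plain classes. This is a conceptual model only, not the MCP SDK. The class names mirror the roles above, while the tool names are made up.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    tools: list[str]


@dataclass
class Client:
    """One client per server: a dedicated one-to-one connection."""
    server: Server

    def list_tools(self) -> list[str]:
        return list(self.server.tools)


@dataclass
class Host:
    """The AI application; it owns one client for each connected server."""
    clients: dict[str, Client] = field(default_factory=dict)

    def connect(self, server: Server) -> Client:
        client = Client(server)
        self.clients[server.name] = client
        return client

    def all_tools(self) -> dict[str, list[str]]:
        return {name: c.list_tools() for name, c in self.clients.items()}


host = Host()
host.connect(Server("filesystem", ["read_file", "write_file"]))
host.connect(Server("search", ["web_search"]))
print(host.all_tools())
```

The host never talks to a server directly. It always goes through the client it created for that server.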

This design is partially inspired by the Language Server Protocol (LSP), which helps different programming languages connect with a wide range of development tools.

The protocol defines three core primitives:

- Resources: Data or content that servers expose to clients. A filesystem server exposes file contents as resources. A database server exposes query results. Resources have URIs for identification and can include text, binary data, or structured information.

- Tools: Functions that models can invoke to perform actions. A GitHub server might expose tools for creating issues, reviewing pull requests, or searching code. Tools have schemas describing their parameters and return values.

- Prompts: Reusable templates that servers provide to guide model interactions. A prompt might include context about how to use certain tools effectively or structure queries for best results.
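The three primitives are easier to picture as data. Below is a sketch of what a server might advertise for each one. The field names (`inputSchema`, `uri`, `mimeType`, `arguments`) follow the MCP schema, while the specific tool, file, and prompt are invented. The helper function is an illustrative extra, not part of the protocol.

```python
import json

# Field names follow the MCP schema; the values are made up.
tool = {
    "name": "create_issue",
    "description": "Create an issue in a repository",
    "inputSchema": {   # JSON Schema describing the tool's parameters
        "type": "object",
        "properties": {
            "repo": {"type": "string"},
            "title": {"type": "string"},
        },
        "required": ["repo", "title"],
    },
}

resource = {
    "uri": "file:///project/README.md",
    "name": "README.md",
    "mimeType": "text/markdown",
}

prompt = {
    "name": "review_pull_request",
    "description": "Guide the model through a code review",
    "arguments": [
        {"name": "pr_url", "description": "Pull request to review", "required": True},
    ],
}


def missing_arguments(tool_def: dict, arguments: dict) -> list[str]:
    """Return required parameters that a proposed tool call leaves out."""
    required = tool_def["inputSchema"].get("required", [])
    return [key for key in required if key not in arguments]


print(json.dumps(tool, indent=2))
print(missing_arguments(tool, {"repo": "octo/demo"}))
```

Because a tool's parameters are described with JSON Schema, a client can check a proposed call before sending it, as the helper does for required fields.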

Dynamic Discovery

Unlike traditional APIs where you must know endpoints beforehand, MCP supports runtime discovery. When a client connects to a server, the server advertises its available resources, tools, and prompts. The client (and the AI model using it) learns what capabilities exist without prior configuration.

This dynamic discovery means you can add new MCP servers to your setup without modifying client code. The AI model automatically discovers and learns to use new capabilities as they become available.
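Discovery happens through ordinary JSON-RPC 2.0 messages such as `tools/list`. The sketch below runs the client and a toy server in the same process to show the idea. It skips the `initialize` handshake that a real session performs first, and the tools themselves are hypothetical.

```python
import json

# Hypothetical server-side registry of tools.
SERVER_TOOLS = [
    {"name": "web_search", "description": "Search the web",
     "inputSchema": {"type": "object",
                     "properties": {"query": {"type": "string"}},
                     "required": ["query"]}},
]


def handle(raw_request: str) -> str:
    """Toy server: answer a JSON-RPC 2.0 `tools/list` request."""
    request = json.loads(raw_request)
    if request.get("method") == "tools/list":
        response = {"jsonrpc": "2.0", "id": request["id"],
                    "result": {"tools": SERVER_TOOLS}}
    else:
        response = {"jsonrpc": "2.0", "id": request.get("id"),
                    "error": {"code": -32601, "message": "Method not found"}}
    return json.dumps(response)


def discover_tools() -> list[str]:
    """Client side: ask what exists instead of hard-coding it."""
    request = json.dumps({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    response = json.loads(handle(request))
    return [tool["name"] for tool in response["result"]["tools"]]


print(discover_tools())

# Registering a new tool on the server changes what the client sees,
# with no edits to the client code above.
SERVER_TOOLS.append({"name": "fetch_page", "description": "Fetch a URL",
                     "inputSchema": {"type": "object",
                                     "properties": {"url": {"type": "string"}}}})
print(discover_tools())
```

The second call returns the new tool even though `discover_tools` itself was never changed.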

Transport Layers

MCP operates over two transport mechanisms:

- Standard Input/Output (stdio): Where servers run as local processes communicating through stdin/stdout. This works well for local integrations where the server and client run on the same machine.

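- Server-Sent Events (SSE) over HTTP: Makes remote servers accessible over a network, which allows distributed deployments where MCP servers run on different machines or even in different data centres. Later revisions of the specification replace this transport with Streamable HTTP, which can still use SSE to stream responses.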
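For the stdio transport, each JSON-RPC message travels as one line of text terminated by a newline. Here is a minimal sketch of that framing, with `io.StringIO` standing in for the pipe between the two processes. The helper names are my own.

```python
import io
import json


def write_message(stream, message: dict) -> None:
    """Write one JSON-RPC message as a single line."""
    # json.dumps escapes newlines inside strings, so the line stays intact.
    stream.write(json.dumps(message) + "\n")


def read_messages(stream):
    """Yield one parsed message per non-empty line."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# io.StringIO stands in for the pipe between client and server processes.
pipe = io.StringIO()
write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
write_message(pipe, {"jsonrpc": "2.0", "id": 2, "method": "resources/list"})

pipe.seek(0)
received = list(read_messages(pipe))
print([m["method"] for m in received])
```

In a real deployment the host would launch the server as a subprocess and apply the same framing to its stdin and stdout.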

MCP vs Traditional APIs: Real-World Use Cases

| Feature | Traditional APIs | MCP (Model Context Protocol) |
|---|---|---|
| Core Function | Enable direct, predictable communication between two specific systems. | Enable an AI model to dynamically discover and interact with multiple systems and tools. |
| Performance | Optimised for low-latency, single-step operations. Faster for direct requests (e.g., payment processing). | Optimised for multi-step AI workflows. Reduces overall task completion time by cutting integration overhead. |
| Flexibility | Protocol-specific by design (e.g., REST, GraphQL). The client must conform to the API’s protocol. | Acts as a universal abstraction layer. The AI client uses one protocol to access diverse data sources. |
| Scalability | Stateless architectures (like REST) scale horizontally with ease to handle high volumes of requests. | Scales by adding new data sources without client code changes. |
| Implementation | Simpler initial setup due to mature frameworks, extensive documentation, and widespread developer familiarity. | Easier to extend once in place, but the initial setup requires an understanding of a newer protocol, connection management, and dynamic discovery. |
| Security Model | Relies on mature, well-understood security patterns like OAuth 2.0, API keys, and RBAC. | Introduces novel, AI-specific risks like prompt injection, token theft, and privilege escalation. |
| Best Use Case | Building stable, performance-critical integrations for web/mobile apps, microservices, and third-party services. | Building AI-first applications, agentic workflows, and development assistants that need access to a changing set of tools. |

Future of APIs: From Endpoints to Context Protocols

For years, APIs meant fixed endpoints, clear routes, and defined requests. REST and SOAP made it simple for one system to talk to another in a predictable way. That approach worked well when applications were static and followed a set pattern.

Today, the way software communicates is shifting. AI systems and large language models do not follow fixed workflows. They need to access data and tools dynamically, based on what they are trying to achieve at that moment. This has led to a gradual move from endpoint-based communication to context-based interaction, where intent guides the connection.

Model Context Protocol (MCP) brings this shift into practice. Instead of developers building separate integrations for each service, MCP allows an AI system to discover available tools and data sources on its own. Once an MCP server is connected, the model can understand what actions are possible and use them directly.

In the next few years, API development will likely move in several clear directions, including:

- Context-aware interfaces that adapt to a model’s intent rather than fixed routes.

- Hybrid architectures that combine REST or GraphQL for structured operations with MCP for flexible, AI-driven tasks.

Traditional APIs are not disappearing. REST, GraphQL, and gRPC will still handle core business processes where consistency and reliability matter most. MCP will complement them by managing AI-based workflows that need adaptability and contextual awareness.
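One way to picture this hybrid arrangement is an MCP tool that simply delegates to an existing REST call. The sketch below stubs the REST layer with a local function. The `get_order_status` tool and the order data are hypothetical. For brevity it reports every lookup failure as a tool error, whereas a fuller implementation would treat an unknown tool name as a protocol-level error.

```python
import json


def rest_get_order(order_id: str) -> dict:
    """Stand-in for an existing REST call such as GET /orders/{id} (hypothetical)."""
    orders = {"A100": {"id": "A100", "status": "shipped"}}
    return orders[order_id]


# Tool name -> plain function that wraps the existing API.
TOOL_HANDLERS = {
    "get_order_status": lambda args: rest_get_order(args["order_id"]),
}


def handle_tools_call(request: dict) -> dict:
    """Answer a JSON-RPC `tools/call` request by delegating to the REST layer."""
    params = request["params"]
    try:
        data = TOOL_HANDLERS[params["name"]](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": json.dumps(data)}],
                  "isError": False}
    except KeyError as exc:
        # Simplification: any failed lookup is reported as a tool error.
        result = {"content": [{"type": "text", "text": f"lookup failed: {exc}"}],
                  "isError": True}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


call = {"jsonrpc": "2.0", "id": 3, "method": "tools/call",
        "params": {"name": "get_order_status", "arguments": {"order_id": "A100"}}}
response = handle_tools_call(call)
print(response["result"]["content"][0]["text"])
```

The REST function stays the source of truth for business logic, and the MCP layer only translates between the model's tool call and that existing interface.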

The future of APIs is not a replacement but an expansion. Traditional APIs will continue to provide stability, while protocols such as MCP will add intelligence and flexibility to modern applications. Together, they will shape how software systems communicate in the years ahead.

Conclusion

Every digital product today depends on APIs. From a payment gateway to a CRM connection, APIs move data and trigger actions across systems.

However, traditional APIs were built for human developers, not for AI systems that can reason, plan, and execute.

This shift is where MCP steps in. Designed by Anthropic, it gives AI agents a standard protocol to discover and use tools, much as browsers use HTTP or devices use USB-C.

When evaluating MCP vs traditional APIs, the question isn’t “which is better,” but which architecture fits your goal: predictable integrations or intelligent automation.